Cloud and Infrastructure

What this category covers

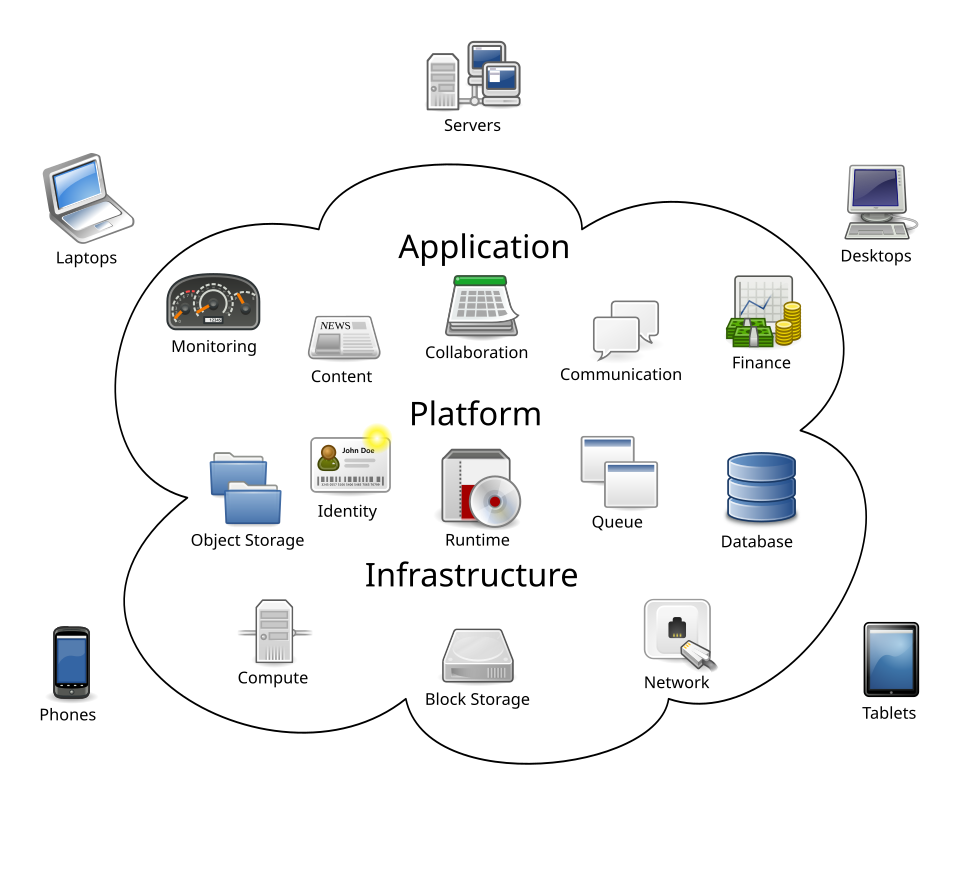

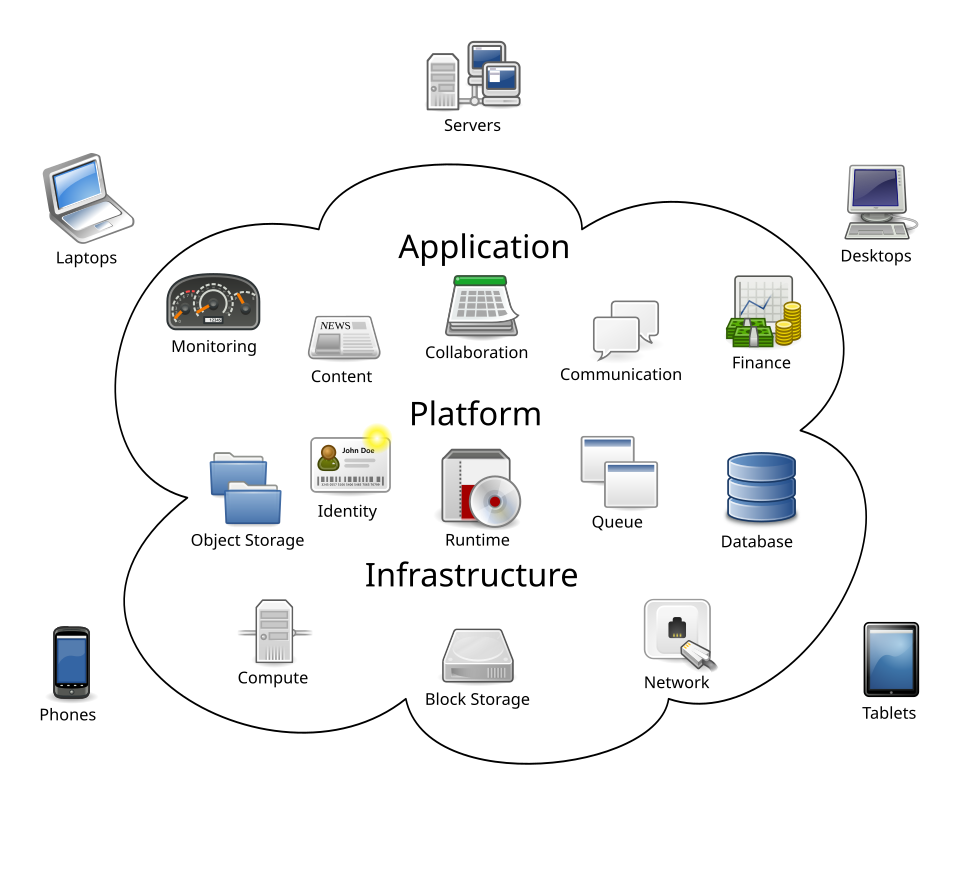

Cloud and infrastructure sit at the heart of how modern software delivers reliability at scale. Our coverage examines the layers from core compute and networking to orchestration, storage strategies, and cost-aware architectures. We focus on translating research into practice, on real-world patterns, and on the decision points teams face when building and operating modern systems. The goal is to connect theory with practice through clear, citation-anchored explainers and real-world examples.

Within this category you will encounter several topic clusters and sub-areas. In this orientation we highlight: distributed systems design, serverless and event-driven architectures, multi-cloud and hybrid environments, edge computing and runtimes, observability and SRE practices, and cost optimization and capacity planning. We translate primary sources, provide context for architectural choices, and compare approaches with concrete tradeoffs. This page also reflects how infrastructure choices influence developer productivity, security posture, and customer experience.

Readers will see a mix of research-to-practice explainers, implementation notes, and critical evaluations of emerging tools. Expect analyses that connect throughput, latency, and reliability to concrete configuration options and pricing. We name real-world constraints, from regulatory considerations to vendor differences, so that decisions are grounded in what actually matters in production environments.

What’s already on the page

Recent top posts in this section show the range of coverage. They include cross-cutting concerns such as tracing across heterogeneous systems, understanding serverless cold starts, and orchestrating edge workloads with container runtimes. We also explore cost-aware patterns for multi-cloud deployments and benchmarking studies that compare cold-start performance across platforms. These pieces share a practical thread: balance performance, cost, and operational complexity when choosing a cloud and infrastructure strategy.

Clustered topics you’ll encounter

- Distributed transactions and data consistency in multi-region setups with event sourcing and idempotency concerns.

- Serverless architectures including function cold-start behavior, pricing models, and portability considerations.

- Edge computing strategies, runtimes, and how to manage latency boundaries at the network edge.

- Orchestration and workflow with Kubernetes, container runtimes, and sidecar patterns.

- Observability across microservices, including tracing, metrics, and structured logging for fault isolation.

- Cost management in cloud-native designs, with benchmarking and architectural patterns for efficient spend.

Concrete country-specific context

Across this category we speak to a broad international audience while acknowledging practical realities in the United States and elsewhere. For example, in the US market many teams rely on major cloud providers like Amazon Web Services, Microsoft Azure, and Google Cloud Platform, with typical monthly budgets for production workloads ranging from $1,000 to $100,000 depending on scale. In the enterprise arena, price sensitivity often hinges on reserved instances, savings plans, and enterprise-wide migration programs. Vendors and regulatory environments differ by region, but the core considerations (availability zones, data residency, and incident response) remain central to architecture choices.

Local ecosystems influence tooling and workflows as well. In North America, common internet service providers for enterprise connectivity include Comcast Business, AT&T, and Charter; in cloud networking, private links and VPNs connect on-premises data centers to cloud VPCs. In financial and healthcare contexts, privacy and breach notification requirements shape logging retention and access control policies. We surface these realities where relevant to illustrate how architectural decisions play out in real organizations.

Comparative landscape: cloud plans and services

| Platform | Pay-as-you-go starting price | Primary strengths | Notable regional considerations |
|---|---|---|---|
| AWS | Lambda from $0.0000167 per GB-second; EC2 t3.micro from $0.0104/hour | Extensive services, global reach, mature tooling | Complex pricing; data residency options vary by region |
| Azure | Functions from $0.000016 per GB-second; VMs from $0.008/hour | Strong enterprise integrations, hybrid capabilities | Pricing and regional quotas require careful planning |
| Google Cloud | Functions from $0.40 per million invocations; Compute Engine from $0.010/hour | Data analytics integrations, network performance | Less mature in some regions; strong in AI/ML |
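To see how per-unit rates like those in the table turn into a monthly figure, here is a minimal Python sketch. It assumes the Lambda figure is a per-GB-second duration rate (memory in GB multiplied by execution time in seconds), and it uses a made-up workload of 5 million invocations at 200 ms and 512 MB. The function names, the 730-hour month, and the workload numbers are illustrative assumptions, not figures from any provider. Per-request fees, free tiers, storage, and data transfer are deliberately left out.

```python
# Rough monthly cost comparison: function duration charges vs. an always-on VM.
# Rates are the starting prices quoted in the table; everything else is hypothetical.

HOURS_PER_MONTH = 730  # common billing approximation (8,760 hours / 12)

LAMBDA_RATE_PER_GB_SECOND = 0.0000167  # from the table (assumed to be per GB-second)
T3_MICRO_HOURLY_RATE = 0.0104          # from the table


def function_duration_cost(invocations, avg_duration_ms, memory_mb, rate_per_gb_second):
    """Duration charge only: GB-seconds consumed times the per-GB-second rate."""
    gb_seconds = invocations * (avg_duration_ms / 1000) * (memory_mb / 1024)
    return gb_seconds * rate_per_gb_second


def always_on_vm_cost(hourly_rate, hours=HOURS_PER_MONTH):
    """Cost of one instance left running for the whole month."""
    return hourly_rate * hours


# Hypothetical workload: 5 million invocations, 200 ms each, 512 MB of memory.
lambda_cost = function_duration_cost(5_000_000, 200, 512, LAMBDA_RATE_PER_GB_SECOND)
vm_cost = always_on_vm_cost(T3_MICRO_HOURLY_RATE)

print(f"Function duration charge: ${lambda_cost:.2f}/month")
print(f"t3.micro always-on:       ${vm_cost:.2f}/month")
```

Under these assumptions the two options land within about a dollar of each other (roughly $8.35 versus $7.59 per month), which is why the break-even point depends so heavily on invocation volume, duration, and memory size. A real comparison would also need the charges omitted here.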

What this means for readers

We present the core ideas in a way that helps technical decision-makers compare options, justify tradeoffs, and plan incremental changes. Our pieces connect the dots between infrastructure design, operational reliability, and cost efficiency, while anchoring conclusions in primary sources and real-world data. We avoid hype and focus on actionable, explainable content that teams can adapt to their environments.

For practitioners, the takeaway is clear: choose architectures that reduce toil, enable observable systems, and scale with predictable cost. For researchers and students, the category offers a window into how innovations in concurrency, networking, and automation translate into tangible improvements in production. And for managers, the category provides a framework to discuss tradeoffs with engineering teams, informed by concrete pricing, service capabilities, and regional considerations.

In this space, you will see hands-on explorations of how container runtimes interact with edge devices, how cold-start dynamics influence user experience, and how multi-cloud patterns can cushion against single-provider constraints. We strive to present the most relevant, current thinking without sacrificing clarity or rigor.

As you browse, you will encounter posts such as Tracing distributed transactions, Understanding serverless cold starts, Edge computing orchestration, and Cost-aware architectural patterns, which reflect the practical focus of our editorial collective. This page is designed to orient you to the kind of content that lives here and how it ties into the broader programming and IT operations landscape.

Cloud & Infrastructure

Tracing distributed transactions across heterogeneous systems

Distributed transactions across heterogeneous systems pose a perennial challenge for reliability and observability. This piece assesses practical end-to-en…

Understanding serverless cold starts in production environments

As serverless architectures proliferate across production systems, understanding cold starts remains essential for engineers balancing latency budgets and …

Edge computing orchestration with container runtimes

As edge computing ecosystems grow more capable yet resource-constrained, orchestrating container runtimes at the network’s edge becomes not just a matter o…