Data & Databases

What this category covers

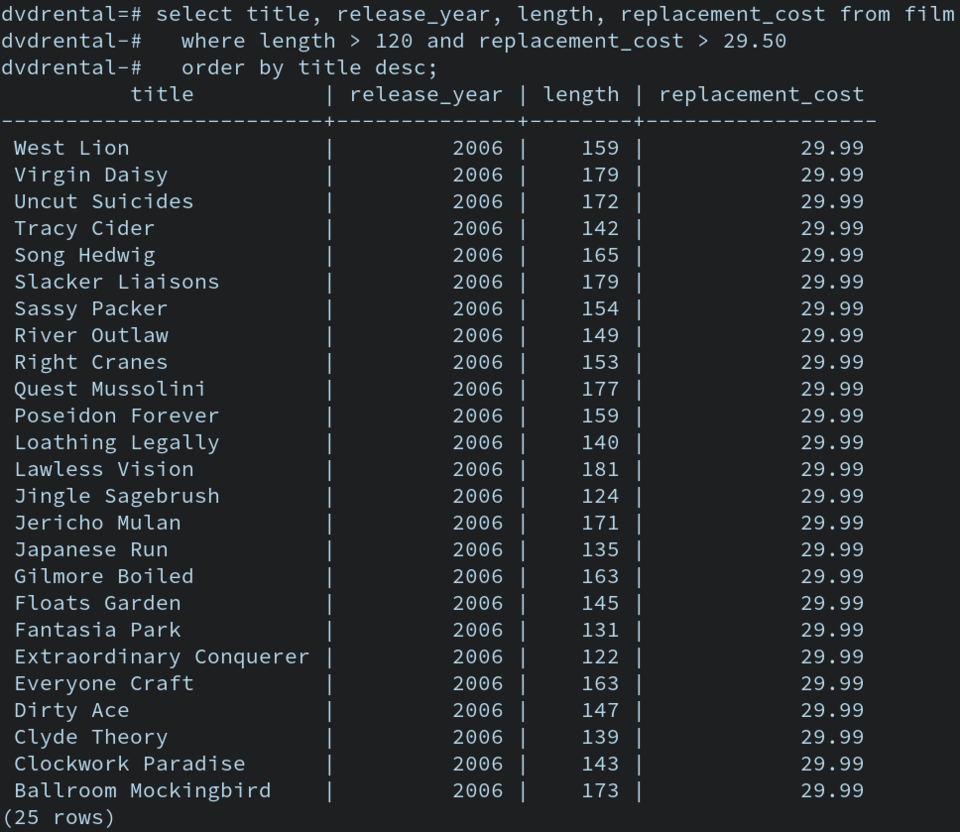

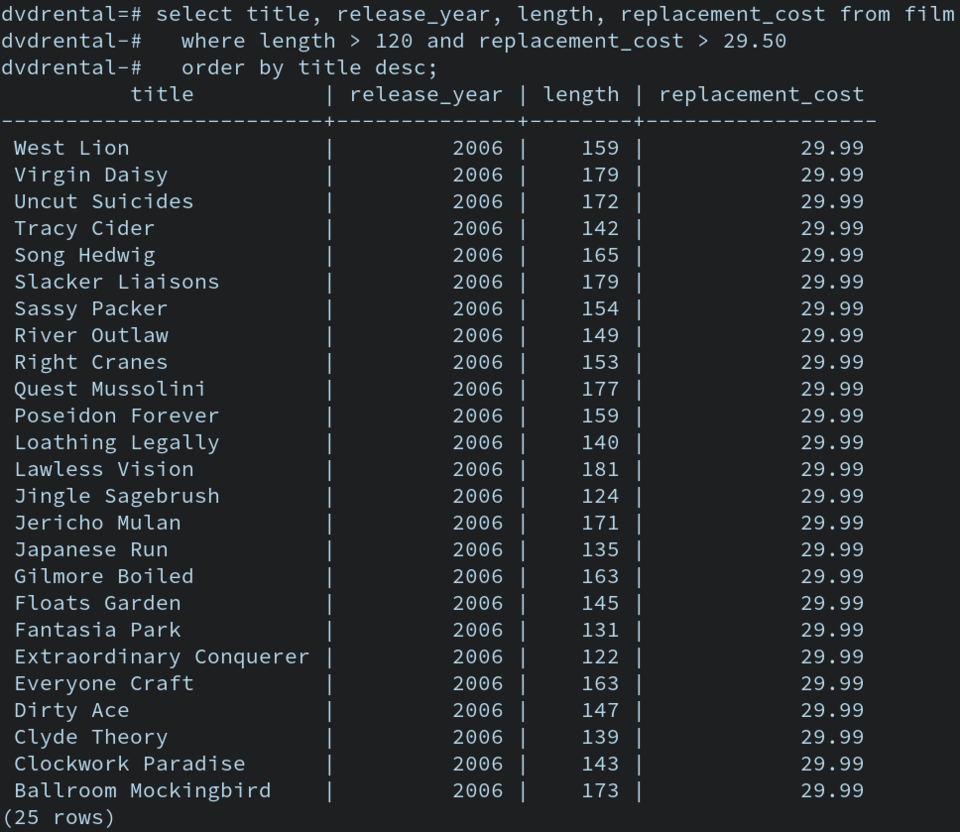

Data & Databases is where we map practical concerns around storage, retrieval, and analysis of information in modern software systems. This space is about how data moves, how it is organized, and how decisions get shaped by the choices we make in databases and data platforms. Evidence of the demand is clear in our top posts: Query optimization strategies for wide column stores, Cache invalidation strategies for web scale apps, Evaluating database isolation levels for modern workloads, Streaming data pipelines with exactly-once semantics, Data governance frameworks for research data, and Data archival strategies for rapid retrieval needs. Beyond those, we cover real-world tradeoffs, from consistency models to archival schemas, and from streaming glue code to governance policies.

In this section you will find several clusters of topic areas, including 1) data modeling and schema design for scale, 2) query performance and optimization across SQL and NoSQL engines, 3) data streaming and event-driven architectures, 4) data governance, privacy, and lifecycle management, 5) storage architectures and durability best practices, and 6) operational considerations for databases in production. Each cluster reflects concrete, hands-on concerns that engineers, researchers, and operators encounter when building robust data systems.

What you’ll see here

We bring plain-language explanations of complex ideas, anchored in primary sources and industry practice. Expect concrete numbers, practical benchmarks, and real-world anecdotes. Our focus is less on theory and more on what you can implement this week to improve reliability, speed, and control over data. We translate vendor documentation, research papers, and engineering blogs into clear, actionable guidance.

How this page is organized

This orientation page curates the most relevant threads and shows how our coverage is evolving. You’ll find short summaries linking to deeper discussions, plus quick comparisons to help you decide what approach fits your needs. The goal is to help you choose a data stack that aligns with your workload, budget, and governance requirements.

Concrete, country-specific context you’ll encounter

We anchor discussions in practical considerations that vary by environment. For example, in the United States and other markets, cloud costs often hinge on egress fees and storage classes at providers such as Amazon S3, Azure Blob Storage, or Google Cloud Storage, with typical data-transfer charges ranging from $0.01 to $0.09 per GB depending on region and tier. In corporate settings, regulations such as HIPAA in healthcare, together with anti-hacking laws like the Computer Fraud and Abuse Act (CFAA) that hinge on whether access was authorized, shape how teams implement access controls, auditing, and retention. In higher education and research labs, data stewardship policies emphasize reproducibility and citation-ready datasets, often using Dublin Core or DataCite metadata schemas, with DataCite DOIs as persistent identifiers.
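To make the egress range concrete, here is a back-of-the-envelope calculation. It is a minimal sketch assuming flat per-GB pricing with no free tier or volume discounts and decimal units (1 TB = 1,000 GB); the 10 TB monthly volume is a hypothetical workload, and real provider price sheets are tiered by region and volume.

```python
# Rough egress-cost estimate using the $0.01-$0.09 per GB range quoted in the text.
# Assumptions (illustrative only): flat per-GB pricing, no free tier,
# no volume discounts, decimal units (1 TB = 1,000 GB).

LOW_RATE_USD_PER_GB = 0.01   # low end of the quoted range
HIGH_RATE_USD_PER_GB = 0.09  # high end of the quoted range


def egress_cost_usd(gb_transferred: float, rate_usd_per_gb: float) -> float:
    """Return the transfer cost for a volume at a flat per-GB rate."""
    if gb_transferred < 0 or rate_usd_per_gb < 0:
        raise ValueError("volume and rate must be non-negative")
    return gb_transferred * rate_usd_per_gb


def egress_cost_range_usd(gb_transferred: float) -> tuple[float, float]:
    """Return (low, high) cost bounds for a volume across the quoted range."""
    return (
        egress_cost_usd(gb_transferred, LOW_RATE_USD_PER_GB),
        egress_cost_usd(gb_transferred, HIGH_RATE_USD_PER_GB),
    )


if __name__ == "__main__":
    monthly_gb = 10 * 1000  # hypothetical workload: 10 TB leaving the cloud per month
    low, high = egress_cost_range_usd(monthly_gb)
    print(f"{monthly_gb} GB/month -> ${low:,.2f} to ${high:,.2f}")
```

At those two rates, 10 TB of monthly egress comes to about $100 at the low end and $900 at the high end, a ninefold spread driven entirely by region and tier.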

Regional context also covers how cloud services are paid for (credit cards, vendor accounts, and purchase orders at universities), state-level privacy laws that influence data handling, and network conditions, where high-throughput pipelines contend with ISP-level latency and peering arrangements. We name cities and regions to illustrate scale: Seattle and New York as hubs for data engineering talent and operations, the San Francisco Bay Area as a center for startup experimentation, and Chicago and Austin as growing nodes for data-centric infrastructure teams.

Section at a glance: what’s hot here

- Query optimization across wide column stores and hybrid transactional/analytical processing (HTAP) patterns.

- Cache strategies and invalidation schemes for multi-tier architectures with CDN and edge components.

- Isolation levels and consistency tradeoffs under modern workloads, including Snapshot Isolation (SI), Read Committed, and Serializable configurations.

- Streaming pipelines with exactly-once semantics and idempotent sinks.

- Data governance frameworks that address provenance, metadata, and access control for research data.

- Archival and retrieval strategies for cold data, including tiered storage and cold-cache designs.
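The streaming bullet pairs exactly-once semantics with idempotent sinks. One common way to get effectively-once results is at-least-once delivery into a sink that ignores records it has already applied. The Python below is a minimal in-memory sketch of that idea, not Kafka's or Pulsar's transactional API; `Event`, `event_id`, `IdempotentBalanceSink`, and `consume` are hypothetical names, and it assumes every event carries a producer-assigned unique ID.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Event:
    event_id: str  # hypothetical unique ID assigned by the producer
    account: str
    amount: int


@dataclass
class IdempotentBalanceSink:
    """Applies each event at most once, keyed by event_id.

    In production the seen-ID set and the balances would live in the same
    store and be updated in one transaction; here both are in memory.
    """
    balances: dict[str, int] = field(default_factory=dict)
    seen_ids: set[str] = field(default_factory=set)

    def apply(self, event: Event) -> bool:
        """Apply the event; return False if it was a duplicate and skipped."""
        if event.event_id in self.seen_ids:
            return False
        self.balances[event.account] = self.balances.get(event.account, 0) + event.amount
        self.seen_ids.add(event.event_id)
        return True


def consume(events, sink: IdempotentBalanceSink) -> int:
    """Feed a possibly-duplicated stream into the sink; return how many events were applied."""
    applied = 0
    for event in events:
        if sink.apply(event):
            applied += 1
    return applied


if __name__ == "__main__":
    stream = [
        Event("e1", "alice", 50),
        Event("e2", "bob", 20),
        Event("e1", "alice", 50),  # duplicate redelivery after a simulated retry
        Event("e3", "alice", -10),
    ]
    sink = IdempotentBalanceSink()
    applied = consume(stream, sink)
    print(applied, sink.balances)
```

Replaying the same stream a second time applies nothing new. In a real pipeline the seen-ID set and the state update must be committed atomically (for example, in one database transaction); otherwise a crash between the two writes can still produce duplicates or losses.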

Comparison at a glance

| Category | Representative Technologies | Typical Use Case | Indicative Price (USD) |
|---|---|---|---|
| Relational DBs | PostgreSQL, MySQL, SQL Server | OLTP with strong ACID guarantees | 0–500 per node/month, depending on vendor and size |
| Wide-column / columnar | Cassandra (wide-column), ClickHouse (columnar) | Large-scale analytics and time-series | 0–200 per node/month for open source; managed services vary |
| Streaming | Kafka, Pulsar | Event-driven pipelines with at-least-once or exactly-once semantics | 0–100 per broker/month, plus hosting costs |
| Object storage | S3, Azure Blob, GCS | Durable storage of large datasets | Billed per GB-month by storage class; egress varies by provider |

Where this leads you

Readers will find clear, actionable guidance grounded in examples from real systems. We aim to help you pick a data model, design robust pipelines, and enforce governance without compromising speed. The content here is designed for engineers who want to translate theory into reliable, observable, and scalable data platforms.

Query optimization strategies for wide column stores

What makes wide column stores tick is not just how much data they hold, but how quickly they can sift through it. This piece examines how indexing, partiti…

Cache invalidation strategies for web scale apps

As web-scale applications span continents and serve billions of requests per day, cache invalidation remains a stubborn bottleneck that can make or break p…

Evaluating database isolation levels for modern workloads

This piece evaluates how database isolation levels perform under mixed read/write workloads with varying persistence guarantees, a question that has grown …